Chat With Your Data: Using RAG to Query Interview Transcripts

Ask follow-up questions across thousands of interviews instantly. Learn how retrieval-augmented generation transforms research data into a searchable knowledge base.

Summary

Retrieval-Augmented Generation (RAG) transforms how researchers interact with interview data. Instead of manually searching through thousands of transcripts, researchers can ask natural language questions and receive answers backed by direct quotes. This article explains how RAG works, why traditional keyword search fails for qualitative data, how vector embeddings capture semantic meaning, and what makes research-specific RAG systems different from generic chatbots.

The Problem With Traditional Search

Keyword Search Breaks Down for Qualitative Data

Imagine you've conducted 500 customer interviews about a new product feature. You want to know: "How do users feel about the onboarding process?"

Traditional keyword search would require you to search for exact terms like "onboarding" or "setup" or "getting started." But participants don't use standardized vocabulary. They say things like:

- "The first time I opened the app, I was totally lost"

- "I couldn't figure out how to begin using it"

- "There wasn't any guidance when I started"

- "The initial experience was confusing"

None of these responses contain your search keywords, yet they're all talking about the same concept: poor onboarding.
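
To make the gap concrete, here is a minimal sketch that runs a plain keyword search over the four quotes above. The `keyword_search` helper is a hypothetical illustration, not part of any particular tool.

```python
import re

# Participant quotes from the example above.
QUOTES = [
    "The first time I opened the app, I was totally lost",
    "I couldn't figure out how to begin using it",
    "There wasn't any guidance when I started",
    "The initial experience was confusing",
]

# The search terms a researcher would likely try.
KEYWORDS = ["onboarding", "setup", "getting started"]


def keyword_search(quotes, keywords):
    """Return quotes containing at least one keyword (case-insensitive)."""
    patterns = [
        re.compile(r"\b" + re.escape(k) + r"\b", re.IGNORECASE) for k in keywords
    ]
    return [q for q in quotes if any(p.search(q) for p in patterns)]


matches = keyword_search(QUOTES, KEYWORDS)
print(f"{len(matches)} of {len(QUOTES)} quotes matched")  # prints "0 of 4 quotes matched"
```

Every quote is about onboarding, yet none is returned, because matching happens on characters rather than on meaning.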

The Manual Alternative Is Worse

Without semantic search, researchers resort to:

- Manual coding: Reading every transcript and tagging themes by hand

- Ctrl+F marathon: Searching dozens of synonym variations

- Spreadsheet chaos: Copy-pasting quotes into endless rows

- Memory dependence: "I remember someone said something about this..."

For a 50-interview study, this might take days. For 500 interviews, it's nearly impossible.

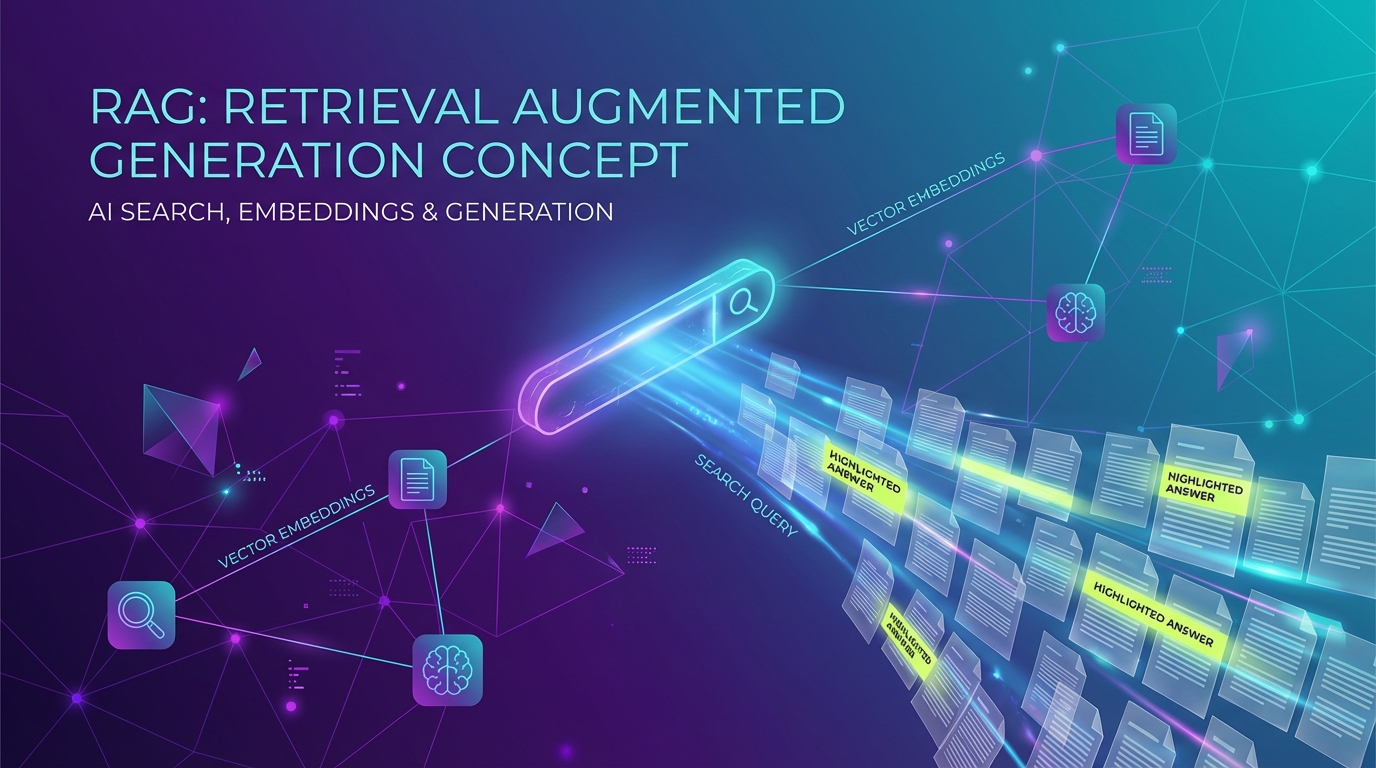

What Is RAG and How Does It Work?

The Basic Architecture

Retrieval-Augmented Generation combines two powerful capabilities:

- Retrieval: Finding relevant information from a large corpus of documents

- Generation: Using an AI language model to synthesize that information into coherent answers

Think of RAG as giving an AI assistant a filing cabinet of your research data. When you ask a question, the system:

- Converts your question into a mathematical representation (a vector embedding)

- Searches through all your interview transcripts to find semantically similar passages

- Retrieves the most relevant chunks of text

- Feeds those chunks to a language model along with your question

- Generates a natural language answer grounded in your actual data
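
The five steps above can be sketched in a few lines of Python. This is a toy under stated assumptions: `embed` is a bag-of-words stand-in for a real embedding model (so it matches on shared words, not on meaning), `generate` is a placeholder for the language model call, and the interview IDs are made up for illustration.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector.
    A real system would call a learned embedding model here."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, chunks, k=2):
    """Steps 1-3: encode the question, score every chunk, keep the top k."""
    q = embed(question)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c["text"])), reverse=True)
    return ranked[:k]


def build_prompt(question, passages):
    """Step 4: put the retrieved passages and the question into one prompt."""
    context = "\n".join(f'[{p["interview_id"]}] {p["text"]}' for p in passages)
    return (
        "Answer using only the passages below and cite interview IDs.\n"
        f"Passages:\n{context}\n\nQuestion: {question}"
    )


def generate(prompt):
    """Step 5: placeholder for the language model call (hypothetical)."""
    return f"(model output for a prompt of {len(prompt)} characters)"


chunks = [
    {"interview_id": "INT-001", "text": "The app takes forever to load my dashboard"},
    {"interview_id": "INT-002", "text": "Pricing feels fair for the time it saves"},
    {"interview_id": "INT-003", "text": "I love the keyboard shortcuts"},
]
question = "Why is the dashboard slow to load?"
top = retrieve(question, chunks, k=1)
prompt = build_prompt(question, top)
answer = generate(prompt)
```

Swapping `embed` for a real embedding model and `generate` for a real model call changes the quality of the results, but not the shape of the loop.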

Why This Matters for Research

The crucial difference between RAG and a generic chatbot: a RAG system is instructed to answer from your documents rather than from general internet knowledge. This grounding sharply reduces hallucination, though it does not eliminate it, which is why every answer should be traceable to specific interview transcripts.

Vector Embeddings: The Magic Behind Semantic Search

From Words to Mathematics

Vector embeddings are numerical representations of text that let computers compare meaning. Instead of matching exact words, embeddings capture semantic concepts.

Here's a simplified example. These sentences would be encoded as similar vectors even though they share no keywords:

"The app crashed when I tried to upload my photo"

"It stopped working during image submission"

"I couldn't attach pictures without the software freezing"

All three describe the same problem: a crash during photo upload. Embedding models recognize this similarity because they've learned from billions of text examples which concepts relate to each other.

How Embeddings Work in Practice

When you ingest interview transcripts into a RAG system:

- Chunking: Each transcript is split into meaningful segments (typically 500-1000 words with overlap)

- Embedding: Each chunk is converted into a high-dimensional vector (often 1536 dimensions)

- Indexing: These vectors are stored in a specialized database called a vector store

- Query encoding: When you ask a question, it's also converted to a vector

- Similarity search: The system finds chunks whose vectors are mathematically closest to your query vector

The beauty is that "closest" in vector space means "semantically similar" in human language.
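
As a rough sketch of the chunking and similarity-search steps, the code below splits text into overlapping word windows and ranks stored vectors by cosine similarity. The function names, the default sizes, and the hand-made three-dimensional vectors are all assumptions for illustration. Real embeddings have far more dimensions, and vector stores typically use approximate indexes rather than the brute-force scan shown here.

```python
import math


def chunk_words(text, size=800, overlap=100):
    """Split text into windows of `size` words; each window repeats the
    last `overlap` words of the previous one."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query_vec, index, k=3):
    """index is a list of (chunk_id, vector) pairs; returns the k best matches."""
    scored = [(cid, cosine_similarity(query_vec, vec)) for cid, vec in index]
    return sorted(scored, key=lambda s: s[1], reverse=True)[:k]


# Tiny demo: 10 words, windows of 4 words, overlap of 1 word.
demo = chunk_words("one two three four five six seven eight nine ten", size=4, overlap=1)

# Hand-made vectors standing in for real embeddings.
index = [("c1", [0.9, 0.1, 0.0]), ("c2", [0.0, 1.0, 0.0]), ("c3", [0.7, 0.3, 0.1])]
nearest = top_k([1.0, 0.0, 0.0], index, k=2)
```

The overlap exists so that a thought split across a chunk boundary still appears whole in at least one chunk.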

Architecture of a Research-Specific RAG System

Core Components

A production RAG system for research data includes several specialized layers:

User Query

↓

Query Preprocessing (expand abbreviations, clarify ambiguity)

↓

Embedding Model (convert to vector)

↓

Vector Database (find similar chunks)

↓

Reranking (score relevance more precisely)

↓

Context Assembly (combine top passages)

↓

Language Model (generate answer with citations)

↓

Response with Source Quotes

What Makes Research RAG Different

Generic chatbot RAG optimizes for quick answers. Research RAG requires additional capabilities:

Citation tracking: Every claim must link back to specific interview IDs and timestamps

Multi-document synthesis: Instead of answering from one document, the system must aggregate patterns across hundreds of interviews

Verbatim preservation: Quotes must be exact, not paraphrased

Metadata filtering: Researchers need to filter by interview date, participant demographics, product version, or custom tags

Confidence scoring: The system should indicate when evidence is strong versus sparse
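
Citation tracking and verbatim preservation can be checked mechanically. The sketch below assumes each chunk carries an interview ID and a start offset in seconds. The `Chunk` fields, the helper names, and the timestamp are hypothetical. A quote is accepted only if it appears word for word in a stored chunk.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Chunk:
    interview_id: str   # hypothetical ID scheme
    start_seconds: int  # offset of the chunk within the recording
    text: str


def normalize(s):
    """Collapse whitespace only; wording and punctuation must match exactly."""
    return " ".join(s.split())


def find_source(quote, chunks) -> Optional[Chunk]:
    """Return the first chunk that contains the quote verbatim, else None."""
    needle = normalize(quote)
    for chunk in chunks:
        if needle in normalize(chunk.text):
            return chunk
    return None


def cite(quote, chunks):
    """Format a verified quote with its interview ID and mm:ss timestamp."""
    source = find_source(quote, chunks)
    if source is None:
        return None  # paraphrased or invented quotes are rejected
    minutes, seconds = divmod(source.start_seconds, 60)
    return f'"{normalize(quote)}" ({source.interview_id}, {minutes:02d}:{seconds:02d})'


# The surrounding words and the timestamp are made up for this demo.
chunks = [
    Chunk("Interview #47", 754, "Honestly? It takes forever to load my dashboard. I gave up twice."),
]
verified = cite("It takes forever to load my dashboard", chunks)
rejected = cite("The dashboard loads very slowly", chunks)
```

Here `verified` carries the interview ID and timestamp, while `rejected` is `None` because the paraphrase never appears in the transcript.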

Example Queries and How the System Works

Query 1: Identifying Pain Points

Question: "What frustrations did users mention about our mobile app?"

How RAG Processes This:

- The embedding model places "frustrations" close to related concepts like "annoying," "difficult," "problem," and "issue"

- Vector search retrieves chunks mentioning negative experiences with the mobile app

- The language model synthesizes patterns:

Generated Answer: "Users reported three main frustrations with the mobile app:

- Slow loading times (mentioned in 23 interviews)

  - 'It takes forever to load my dashboard' (Interview #47)

  - 'I've waited 30 seconds just to see my data' (Interview #132)

- Confusing navigation (mentioned in 18 interviews)

  - 'I can never find where they moved the settings' (Interview #203)

  - 'The menu structure doesn't make sense' (Interview #88)

- Battery drain (mentioned in 15 interviews)

  - 'My phone dies twice as fast when this app runs' (Interview #291)

  - 'It's a huge battery hog' (Interview #315)"

Notice how each finding includes the frequency count and direct quotes with interview IDs.
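
Frequency counts like these are more dependable when computed in code than when estimated by the language model. One possible approach, sketched below, counts distinct interview IDs per theme. It assumes an upstream step has already tagged each retrieved passage with a theme, and the sample data is invented for illustration.

```python
from collections import defaultdict


def count_interviews_per_theme(tagged_passages):
    """Count distinct interviews per theme.

    tagged_passages is an iterable of (interview_id, theme) pairs. Several
    passages from the same interview count once for a given theme.
    """
    seen = defaultdict(set)
    for interview_id, theme in tagged_passages:
        seen[theme].add(interview_id)
    counts = {theme: len(ids) for theme, ids in seen.items()}
    # Most frequently mentioned themes first; ties broken alphabetically.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


# Invented sample: interview 47 mentions slow loading twice but counts once.
tagged = [
    (47, "slow loading"),
    (47, "slow loading"),
    (132, "slow loading"),
    (203, "confusing navigation"),
    (88, "confusing navigation"),
    (132, "confusing navigation"),
    (291, "battery drain"),
]
theme_counts = count_interviews_per_theme(tagged)
```

Counting interviews rather than passages keeps one talkative participant from inflating a theme.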

Query 2: Temporal Analysis

Question: "How have opinions about pricing changed between January and March?"

How RAG Processes This:

- Metadata filtering restricts search to interviews from those months

- Embedding captures sentiment around pricing

- The system compares patterns across timeframes

Generated Answer: "Pricing sentiment shifted noticeably between January and March:

January (mostly negative):

- 'It's way too expensive for what you get' (Interview #12, Jan 5)

- 'I'm considering switching to a cheaper alternative' (Interview #34, Jan 19)

March (more accepting):

- 'After using it for a while, the price makes sense' (Interview #156, Mar 8)

- 'It's expensive but worth it for the time savings' (Interview #178, Mar 22)

This suggests users need time to experience value before justifying the cost."
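
The metadata filtering step for a query like this might look as follows. The sketch restricts chunks to a date range before any semantic ranking happens, then groups them by month for comparison. The year (2025), the out-of-range fifth record, and the field names are assumptions for illustration.

```python
from datetime import date


def filter_by_date(chunks, start, end):
    """Keep only chunks whose interview date falls within [start, end]."""
    return [c for c in chunks if start <= c["date"] <= end]


def group_by_month(chunks):
    """Group chunks under a 'YYYY-MM' key so timeframes can be compared."""
    groups = {}
    for c in chunks:
        groups.setdefault(c["date"].strftime("%Y-%m"), []).append(c)
    return groups


chunks = [
    {"interview_id": 12, "date": date(2025, 1, 5),
     "text": "It's way too expensive for what you get"},
    {"interview_id": 34, "date": date(2025, 1, 19),
     "text": "I'm considering switching to a cheaper alternative"},
    {"interview_id": 156, "date": date(2025, 3, 8),
     "text": "After using it for a while, the price makes sense"},
    {"interview_id": 178, "date": date(2025, 3, 22),
     "text": "It's expensive but worth it for the time savings"},
    # Hypothetical passage outside the requested window.
    {"interview_id": 999, "date": date(2025, 4, 2),
     "text": "(out-of-range passage)"},
]

in_range = filter_by_date(chunks, date(2025, 1, 1), date(2025, 3, 31))
by_month = group_by_month(in_range)
```

The same pattern works for segment metadata: filter on a `segment` field instead of `date`, then compare the groups.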

Query 3: Comparative Analysis

Question: "How do power users describe the product differently than casual users?"

This query requires the system to:

- Filter by user segment metadata

- Identify distinctive language patterns in each group

- Contrast the findings

Generated Answer: "Power users and casual users focus on completely different aspects:

Power users emphasize efficiency and customization:

- 'The keyboard shortcuts save me hours every week' (Interview #67, power user)

- 'I love that I can automate my entire workflow' (Interview #203, power user)

Casual users care more about simplicity and guidance:

- 'I just need it to work without thinking too hard' (Interview #88, casual user)

- 'The basic features are all I need' (Interview #134, casual user)

This suggests two distinct value propositions in your product."

Handling Multi-Language Transcript Search

The Challenge of Cross-Language Research

Modern research is increasingly global. You might have interviews in English, Spanish, Mandarin, and French — all discussing the same product.

How Modern Embeddings Handle Multiple Languages

Recent embedding models are trained on multilingual corpora, meaning they can represent concepts across languages in the same vector space. Practically, this means:

Same concept, different languages = similar vectors

"The checkout process is broken" (English)

"El proceso de pago está roto" (Spanish)

"结账流程有问题" (Mandarin)

All three would be encoded as similar vectors because they express the same semantic meaning.

Querying Across Languages

With a multilingual RAG system, you can:

- Ask questions in English and retrieve relevant passages in any language

- See answers that synthesize findings across all language groups

- Identify cultural differences in how concepts are discussed

Example query: "What payment methods do users request?"

Response might include:

- English interviews mentioning PayPal and Apple Pay

- Spanish interviews requesting bank transfers

- Mandarin interviews asking for Alipay and WeChat Pay

The system automatically aggregates across languages without requiring manual translation.

RAG for Research vs. Generic Chatbots

Different Goals, Different Architectures

| Feature | Generic Chatbot | Research RAG |
|---|---|---|
| Data source | General knowledge + maybe some docs | Only your interview transcripts |
| Answer style | Conversational and helpful | Analytical with evidence |
| Citation | Optional or vague | Required with exact quotes |
| Hallucination risk | High (will make things up) | Low (limited to document content) |
| Aggregation | Summarizes individual documents | Synthesizes patterns across hundreds |
| Metadata use | Rarely used | Critical for filtering and segmentation |
| Update frequency | Static knowledge cutoff | Real-time as interviews are added |

Why You Can't Just Use ChatGPT

While you could paste interview transcripts into ChatGPT, you'd run into several problems:

Context limits: ChatGPT has a token limit. You can't paste 500 full transcripts.

No citation tracking: It won't tell you which interview a quote came from.

No metadata filtering: You can't easily say "show me only interviews from Q1" or "compare enterprise vs SMB customers."

Hallucination risk: Generic models may embellish or make up details.

No persistence: Every new question requires re-uploading context.

Research-specific RAG addresses these issues with purpose-built architecture.

Building Trust in RAG Answers

The Transparency Problem

One legitimate concern with RAG: How do you know the AI isn't cherry-picking quotes or misrepresenting your data?

Best Practices for Trustworthy RAG

1. Always show source quotes: Every claim should link to verbatim text from transcripts

2. Show confidence scores: Indicate when evidence is strong (mentioned in 40 interviews) versus weak (mentioned in 2 interviews)

3. Allow transcript drill-down: Users should be able to click through to read full context

4. Highlight limitations: If the system finds conflicting evidence, it should surface that tension

5. Version control: Track when transcripts were added so researchers know what data informed each answer
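
Practices 2 and 4 can be made explicit in code. The sketch below labels evidence strength from how many distinct interviews support a claim and flags whether any interview contradicts it. The thresholds (`strong_share`, `min_count`) and the three labels are illustrative assumptions that each team would need to calibrate.

```python
def evidence_report(supporting_ids, contradicting_ids, total_interviews,
                    strong_share=0.05, min_count=3):
    """Summarize how well a claim is supported across the corpus.

    strong_share and min_count are placeholder thresholds, not standards.
    """
    supporting = set(supporting_ids)
    contradicting = set(contradicting_ids)
    share = len(supporting) / total_interviews if total_interviews else 0.0
    if len(supporting) < min_count:
        strength = "weak"
    elif share >= strong_share:
        strength = "strong"
    else:
        strength = "moderate"
    return {
        "supporting": len(supporting),
        "share": round(share, 3),
        "strength": strength,
        "conflicting": len(contradicting) > 0,  # surface tension in the data
    }


# 40 of 500 interviews support the claim, none contradict it.
strong = evidence_report(range(1, 41), [], 500)
# 2 of 500 interviews support the claim, 1 contradicts it.
weak = evidence_report([7, 9], [12], 500)
```

Reporting the raw count alongside the label lets researchers apply their own judgment about what counts as enough evidence.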

Human-in-the-Loop Analysis

RAG should accelerate research, not replace researcher judgment. The ideal workflow:

- RAG generates initial findings with supporting quotes

- Researcher reviews the evidence and reads full transcripts for key quotes

- Researcher refines queries based on what RAG surfaces

- RAG helps explore unexpected patterns the researcher wants to investigate

- Researcher synthesizes final insights with human judgment

Think of RAG as an infinitely patient research assistant that's read every interview but still needs your expertise to interpret what matters.

Practical Implementation Considerations

Data Preparation

Before RAG can work well, transcripts need structure:

Clean formatting: Remove interviewer prompts or mark them clearly

Consistent metadata: Tag each interview with participant demographics, date, product version, etc.

Quality transcription: Poor transcription quality leads to poor retrieval

Chunking strategy: Decide whether to chunk by speaker turn, by paragraph, or by semantic topic
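
As one example of these choices, here is a minimal sketch of chunking by speaker turn. It assumes transcripts use `Interviewer:` and `Participant:` line prefixes, which is a hypothetical format. Each participant turn becomes a chunk, and the preceding interviewer prompt is kept as context instead of being indexed as an answer.

```python
import re

# Assumed transcript format: each turn starts with a speaker label.
TURN = re.compile(r"^(Interviewer|Participant):\s*(.*)$")


def split_turns(transcript):
    """Parse a transcript into a list of {'speaker', 'text'} turns."""
    turns = []
    for line in transcript.splitlines():
        line = line.strip()
        if not line:
            continue
        match = TURN.match(line)
        if match:
            turns.append({"speaker": match.group(1), "text": match.group(2)})
        elif turns:
            # A line without a label continues the previous turn.
            turns[-1]["text"] += " " + line
    return turns


def participant_chunks(turns):
    """One chunk per participant turn, with the last question as context."""
    chunks, last_question = [], None
    for turn in turns:
        if turn["speaker"] == "Interviewer":
            last_question = turn["text"]
        else:
            chunks.append({"question": last_question, "text": turn["text"]})
    return chunks


transcript = """
Interviewer: How was your first week with the app?
Participant: The first time I opened the app, I was totally lost.
There wasn't any guidance when I started.
Interviewer: What did you do next?
Participant: I couldn't figure out how to begin using it.
"""
turns = split_turns(transcript)
chunks = participant_chunks(turns)
```

Keeping the question beside the answer helps later when a short reply like "Yes, constantly" would be meaningless on its own.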

Choosing the Right Embedding Model

Different models excel at different tasks:

General-purpose models (like OpenAI's text-embedding-3-large): Good for most research use cases

Domain-specific models: Better if you have highly technical or medical content

Multilingual models (like Cohere's embed-multilingual): Essential for cross-language research

Vector Database Options

Popular choices for research RAG:

Pinecone: Managed service, easy to set up, handles scale well

Weaviate: Open source, strong metadata filtering

Qdrant: Fast, efficient vector similarity search

Postgres with pgvector: Simple if you already use Postgres

Cost Considerations

RAG has two main cost drivers:

Embedding costs: Charged per token when converting transcripts to vectors (usually under $50 for 500 interviews)

Query costs: Charged per API call to the language model (typically $0.01-$0.10 per query)

For most research teams, the cost savings from analyst time far exceed the API costs.
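
A back-of-the-envelope estimate can be scripted so you can plug in your own numbers. Every figure in the demo below is a placeholder assumption, not a quoted rate: transcript length, tokens per word, embedding price, and query volume. Check your provider's current pricing before relying on the output.

```python
def embedding_cost(num_interviews, words_per_interview, tokens_per_word,
                   price_per_million_tokens):
    """One-time cost of embedding the whole corpus, in dollars."""
    tokens = num_interviews * words_per_interview * tokens_per_word
    return tokens / 1_000_000 * price_per_million_tokens


def monthly_query_cost(queries_per_month, cost_per_query):
    """Recurring language model cost, in dollars per month."""
    return queries_per_month * cost_per_query


# Placeholder inputs: 500 interviews of about 8,000 words each, roughly
# 1.3 tokens per word, and an assumed $0.10 per million embedding tokens.
one_time = embedding_cost(500, 8_000, 1.3, 0.10)

# Placeholder inputs: 200 queries a month at an assumed $0.05 per query.
recurring = monthly_query_cost(200, 0.05)

print(f"Embedding (one-time): ${one_time:.2f}")
print(f"Queries (monthly):    ${recurring:.2f}")
```

Under these assumptions the recurring query cost, not the one-time embedding cost, is the larger line item.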

Key Takeaways

- Keyword search fails for qualitative data because participants use varied, natural language rather than standardized terms

- Vector embeddings enable semantic search by converting text into mathematical representations that capture meaning, not just exact words

- RAG combines retrieval and generation to answer questions grounded in your interview transcripts, which reduces the risk of hallucination

- Research-specific RAG requires citation tracking, multi-document synthesis, and metadata filtering — features generic chatbots lack

- Multilingual embeddings allow cross-language analysis so you can query interviews in any language and see patterns across global research

Synthesize Labs lets you chat with your research data. Ask follow-up questions across all interviews and get answers backed by direct quotes. Learn more.

Written by Synthesize Labs Team

Published on January 28, 2026